On-call buddy

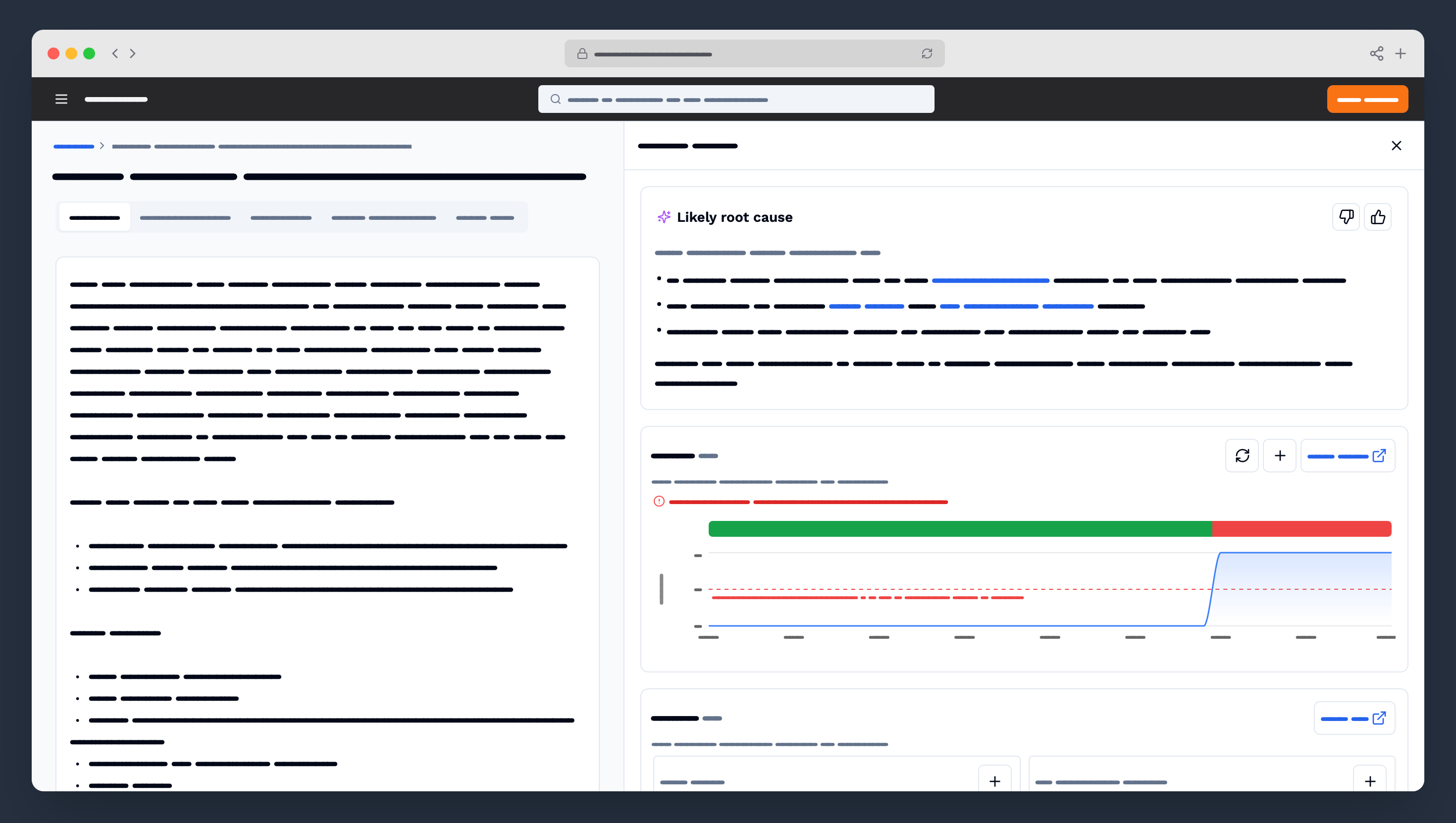

An AI-powered support tool that surfaces relevant resources to help engineers quickly find the root cause of incidents. As Lead Designer, I independently owned the full design arc, including research, concepting, AI exploration, and validation, while collaborating with Principal Product and Engineering leads to define the vision.

Intro

At the time of this project, Amazon expected all of its 80,000 developers to participate in on-call rotations. When an incident occurred, developers were spending an average of 45 minutes diagnosing the root cause, a process that was both professionally and personally disruptive.

I owned this project end to end over seven months, from generative research through design delivery and evaluative research. After a literature review, I designed and distributed a 12-question survey to quantify, at scale, the most difficult and repetitive parts of the diagnosis process. The results identified log diving and metrics investigation as both the most difficult and the most repetitive tasks. They also revealed that developers were opening dozens of tabs across different resources and triangulating information among them to understand the root cause.

Those findings directly shaped the solution: a tool that automatically pulls in the most frequently used resources and surfaces the most relevant data based on time correlation, giving developers a clear starting point for diagnosis. I validated this concept in two rounds of semi-structured interviews with developers from different organizations and teams. I also explored and validated AI features as part of the near- and long-term product vision, collaborating closely with principal leads across product and development to shape the roadmap.

Two months after launch to ~1,500 beta participants, the tool had reduced the average time spent diagnosing root causes by 26% (from 45 minutes to 33), with a 58.6% CSAT score and only 14% of participants expressing dissatisfaction. Satisfied users consistently highlighted the tool's ability to quickly surface contextual information, while neutral respondents largely cited limited exposure during the beta window, signaling an opportunity to improve onboarding and feature discoverability.

© Emilie Thaler 2026. Portfolio made with ♥, Kirby CMS, & Claude Code.